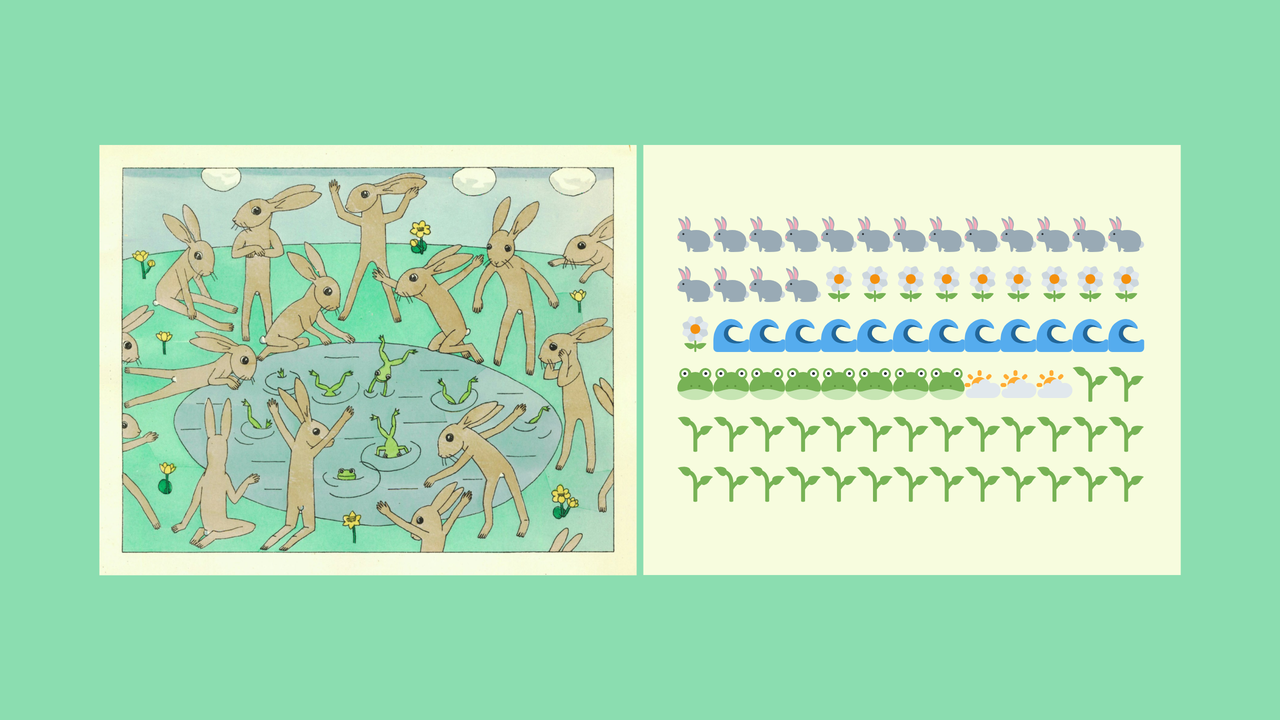

Image attribution: Source: Dominika Čupková & Archival Images of AI + AIxDESIGN / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/

Artists as Invisible Data Workers

In the rapidly expanding world of AI-generated art, millions of images are produced daily with prompts like “Ghiblify this” or “make it look like Pixar art.” Since the launch of text-to-image models, more than 15 billion images have been produced, most of them by four models – DALL-E, Stable Diffusion, Midjourney, and Adobe Firefly. Yet none of the artists whose hard work has served as training material for these models receive any share of the profits made by these companies.

Behind every AI model’s ability to replicate these distinctive art styles lies an uncomfortable truth: artists have become unwitting data workers, and their life’s work is harvested and transformed into mere data points in massive datasets without their knowledge or consent.

What Dataset Harvesting Looks Like

“Data work” in AI training typically refers to human annotators labelling images or content moderators reviewing outputs. But there’s another category of data worker that remains largely unrecognised: the artists whose creative output becomes the foundation for these systems. Each careful decision about colour, style, and texture represents countless hours of training and skilled labour – intricate artistic production that once defined cultural value and commanded premium prices, now reduced to free training data harvested without attribution.

LAION-5B, one of the biggest image datasets, with 5.85 billion images and a digital footprint of 220 terabytes, is one such example. It is built by scraping essentially every brush stroke that artists worldwide have put online and turning it into input data for AI models. Notably, one of the dataset’s sponsors is Stability AI, the creator of Stable Diffusion, an image generation model that in 2023 had over 10 million users creating 2 million images daily.

This work feeds into AI systems through systematic data harvesting, under the guise of “fair use”. Web scraping operations crawl through art platforms, social media, and digital galleries, collecting millions of images without explicit permission. These automated systems don’t distinguish between a quick sketch and a master’s lifework – both become training data points in massive datasets.

Often without realising it, users themselves create a feedback loop that reinforces this extraction. When users request “Ghibli-style” artwork, they’re essentially providing free labelling services, teaching models to associate specific visual elements with particular artists’ names. Each prompt becomes a data annotation session, further cementing the connection between artistic styles and their creators in the AI’s neural pathways. Moreover, these interactions can continuously refine the models. When users rate outputs or iterate on prompts, they’re providing additional training signals that make the replication of artistic styles even more precise, through techniques such as reinforcement learning from human feedback (RLHF).

Case Study: Miyazaki as a Data Worker

Hayao Miyazaki, one of the greatest animators of our time, is known for his intricately crafted films such as Spirited Away, Howl’s Moving Castle, and My Neighbour Totoro. Over decades of practice, he has developed a distinctive and widely recognised art style. It is concerning, then, that this same stylistic consistency has inadvertently created one of the most comprehensive ‘style datasets’ available to AI systems.

Last year’s trend of “Ghiblification” highlights this issue, as millions of users, including politicians and celebrities, demonstrated how years of meticulous craftsmanship can be distilled into algorithmic patterns that produce cheap gimmicks. Notably, the trend started at an OpenAI launch event demonstrating the image capabilities of its latest model, and resulted in ChatGPT gaining over a million users within one hour, as well as ‘melting their GPUs’, as per Sam Altman. The irony here is profound, as Miyazaki is famous for despising the use of technology in creating media.

The Changing Value of Human Creativity

The difference between traditional data workers and artists brings to light a troubling inequity in how different forms of AI labour are valued. Data workers, irrespective of the type of task they perform, receive compensation, however inadequate, for their contributions to AI systems. Artists, by contrast, find their life’s work harvested and transformed into training data without any form of compensation. This asymmetry highlights a critical gap in our understanding of what constitutes “work” in the age of AI.

This calls for urgent consideration of new frameworks that address both fair compensation for artists’ unwitting data labour and robust ownership protections that do not evaporate the moment work enters the public sphere. We need systems that preserve artists’ rights over how their publicly shared work gets used, particularly for commercial AI training. The question isn’t whether such protections are technically feasible, but whether we are willing to challenge an economic model that treats public sharing as forfeiting all future control over one’s creative output.

Conclusion

As AI art floods our feeds, each click and each prompt chips away at the painstaking labour of creators. We have seen how billions of images slip into training sets – unpaid, uncredited, unconsented – and are thus exploited. Artists of many kinds, as the SAG-AFTRA strike showed, are becoming unwitting contributors to AI systems that can replicate their life’s work with a simple prompt, while tech companies profit from decades of human creativity without sharing any returns.

This raises a fundamental question: if artists have become unwitting data workers in the AI economy, do they not deserve both meaningful control over their creative expression and fair compensation for it?