Image sourced from unsplash.com

Balancing the promises and perils of Artificial Intelligence in healthcare

Artificial intelligence (AI) has the potential to significantly augment diagnostic capabilities: predictive AI tools can aid in detecting diseases, provide access to curated knowledge, suggest treatment routes and even power patient monitoring systems. However, this growing industry also comes with its own challenges and risks. Chief among them is bias: AI-backed and AI-enabled healthcare systems can encode and reproduce biases.

These biases can exacerbate existing health disparities among patient groups and communities across race, ethnicity, religion, gender, disability and sexual orientation. When algorithmic predictions are applied to underrepresented groups, they are more likely to be inaccurate, often underestimating these groups' health and care needs. Such inaccuracies in diagnosis, treatment and patient monitoring are especially risky in high-stakes cases, can erode patient trust, and can lead to legal and ethical violations of patient rights. As these concerns have emerged, efforts to govern AI in healthcare have surged, many of them aimed at mitigating the risks associated with bias. To mitigate these biases meaningfully, it is critical to identify how different sources of bias in AI systems harm patient care. This requires closely examining how such biases emerge and accumulate across the complex, multi-actor processes that shape AI development and deployment.

Understanding bias in Health AI

Bias has often been understood as the product of statistical or computational errors that lead to inaccurate results. Experts have since broadened this understanding, showing how human cognitive biases are encoded into AI systems. The social, political and cultural settings in which AI systems are built, along with the actors and institutions involved, interact to shape how these systems are developed and deployed, encoding societal and individual biases such as prejudices and stereotypes within them. Our research identifies three key sources of bias. Below, we examine each source and show how it produces harmful outcomes in the health sector:

- Pre-existing Bias: These biases exist independently of the technology but often influence its development. Biases in human decision-making, or societal biases reflected in the data, make their way into AI systems.

Example: Pre-existing gender bias in health becomes entrenched in AI. Historically, women’s participation in clinical trials has been low, resulting in limited research on how diseases are identified in women and how they affect them. Understanding symptoms and risk indicators specific to female physiology therefore remains a key challenge. AI models trained on such historical datasets can reproduce these gender biases, increasing the risk of misdiagnosis and delayed treatment.

- Technical Bias: These biases arise from design decisions made in developing a technology. Datasets built on non-representative sampling of demographics, or model architectures that characterise data poorly, can produce biased results.

Example: Technical choices in training and tuning AI models can introduce societal or racial bias. AI models often use proxies to predict care or treatment needs. However, proxies such as health expenditure, when used to determine critical care needs, do not account for structural inequalities. As a result, communities or groups that spend less on health are predicted to need less critical care. This skewed picture further entrenches such biases and exacerbates the challenges these communities already face.

- Emergent Bias: Biases can also arise when technologies are adopted. These include cases where the AI's training environment does not reflect its implementation environment, as well as biases produced as users interact with AI systems and create feedback loops.

Example: Moving AI models from one context to another can miss local realities, resulting in harm to patients. The push for AI adoption in healthcare has driven its uptake across countries in the Global South. However, AI models developed in the Global North, when applied in Global South contexts, miss local epidemiological realities and lack cultural competence, resulting in misdiagnosis and ineffective treatment.
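The proxy problem described in the Technical Bias example can be illustrated with a small toy simulation. This is a minimal sketch under stated assumptions, not a method from our research: the patient records, the 0.6 spending rate for group B, the 20% cut-off and the helper names (`Patient`, `make_patients`, `select_top`, `share_of_group`) are all hypothetical. Both groups have identical care needs by construction, but group B is assumed to spend less per unit of need because of access barriers. Ranking patients by true need flags both groups equally; ranking by spending, the proxy, flags only group A.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str        # hypothetical demographic group label
    need: float       # true care need (not observed by the model)
    spending: float   # past health expenditure, used as the proxy label


def make_patients(spend_rate_b: float = 0.6) -> list[Patient]:
    """Build a toy population in which groups A and B have identical needs.

    Assumption (illustrative only): group A spends 1.0 unit per unit of need,
    while group B spends only `spend_rate_b` units because of structural
    barriers such as cost or distance to care.
    """
    patients = []
    for need in range(1, 11):
        patients.append(Patient("A", float(need), need * 1.0))
        patients.append(Patient("B", float(need), need * spend_rate_b))
    return patients


def select_top(patients: list[Patient], key, k: int) -> list[Patient]:
    """Return the k patients ranked highest by `key`, e.g. those flagged for critical care."""
    return sorted(patients, key=key, reverse=True)[:k]


def share_of_group(selected: list[Patient], group: str) -> float:
    """Fraction of the selected patients who belong to `group`."""
    return sum(p.group == group for p in selected) / len(selected)


if __name__ == "__main__":
    patients = make_patients()
    k = len(patients) // 5  # hypothetical rule: flag the top 20% for critical care

    by_need = select_top(patients, lambda p: p.need, k)
    by_proxy = select_top(patients, lambda p: p.spending, k)

    print("group B share, ranked by true need:", share_of_group(by_need, "B"))
    print("group B share, ranked by spending:", share_of_group(by_proxy, "B"))
```

With these toy numbers, group B's share of flagged patients falls from 0.5 to 0.0 even though need is identical in both groups: lower spending is read as lower need, which is exactly the skew the example describes.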

Mapping and Mitigating Bias in the Healthcare Value Chain

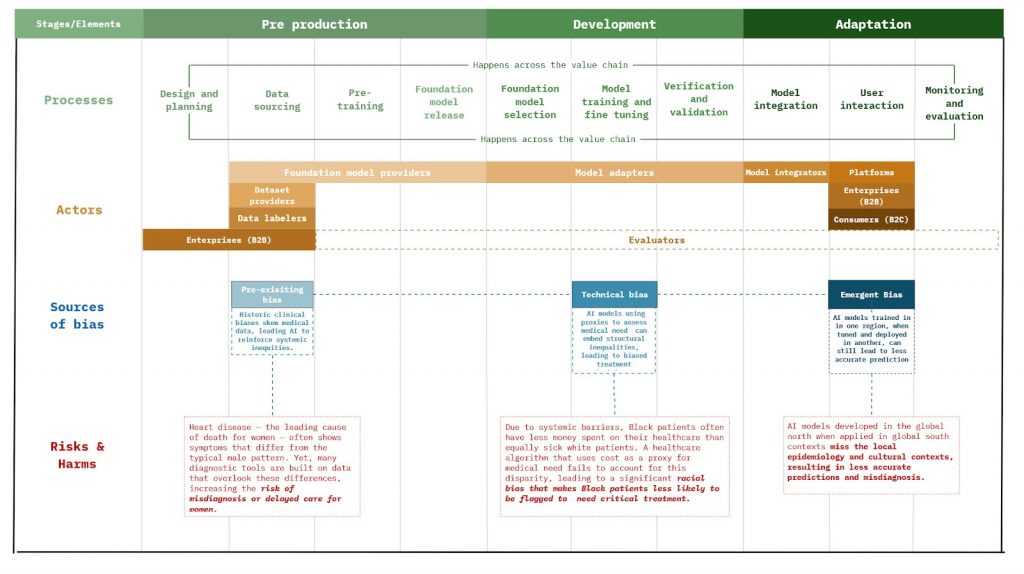

Recognising the various sources of bias, and how they produce risky and harmful AI behaviour, is an important first step. To address these biases effectively, however, we must examine where and how they become entrenched in the AI development lifecycle. Our research at Aapti shows that adopting a value chain ontology can be an effective way of understanding this issue.

A value chain ontology breaks down the development and deployment of AI systems into interconnected networks of processes and actors. We can look at AI development and deployment across three phases: pre-development, deployment and adaptation. Each phase of the value chain contains specific processes and the various actors who perform or influence them.

Using this ontological framework, we can further identify where different sources of bias can affect the development process. Below, we map the various pain points across the AI value chain where the different sources of bias can arise, and how these biases translate into risks and harms in the health sector.

This mapping allows us to investigate how the sources of bias interact with the different processes in the AI development cycle. It also enables us to locate the accountability and responsibilities of the actors influencing these processes. A broader mapping can thus help us build robust Responsible AI tools, principles and frameworks that are stage-specific and that check for, and treat, bias in AI systems across the entire AI value chain.

To this end, Aapti researchers studying bias within the health sector and beyond have proposed a stage-adaptive, actor-specific approach to fairness across the AI value chain, examining in depth how this orientation towards treating bias can help build fair and responsible AI models and systems.